Evaluating a Behavioural Road Safety Intervention: The “Falling from a Height” Campaign

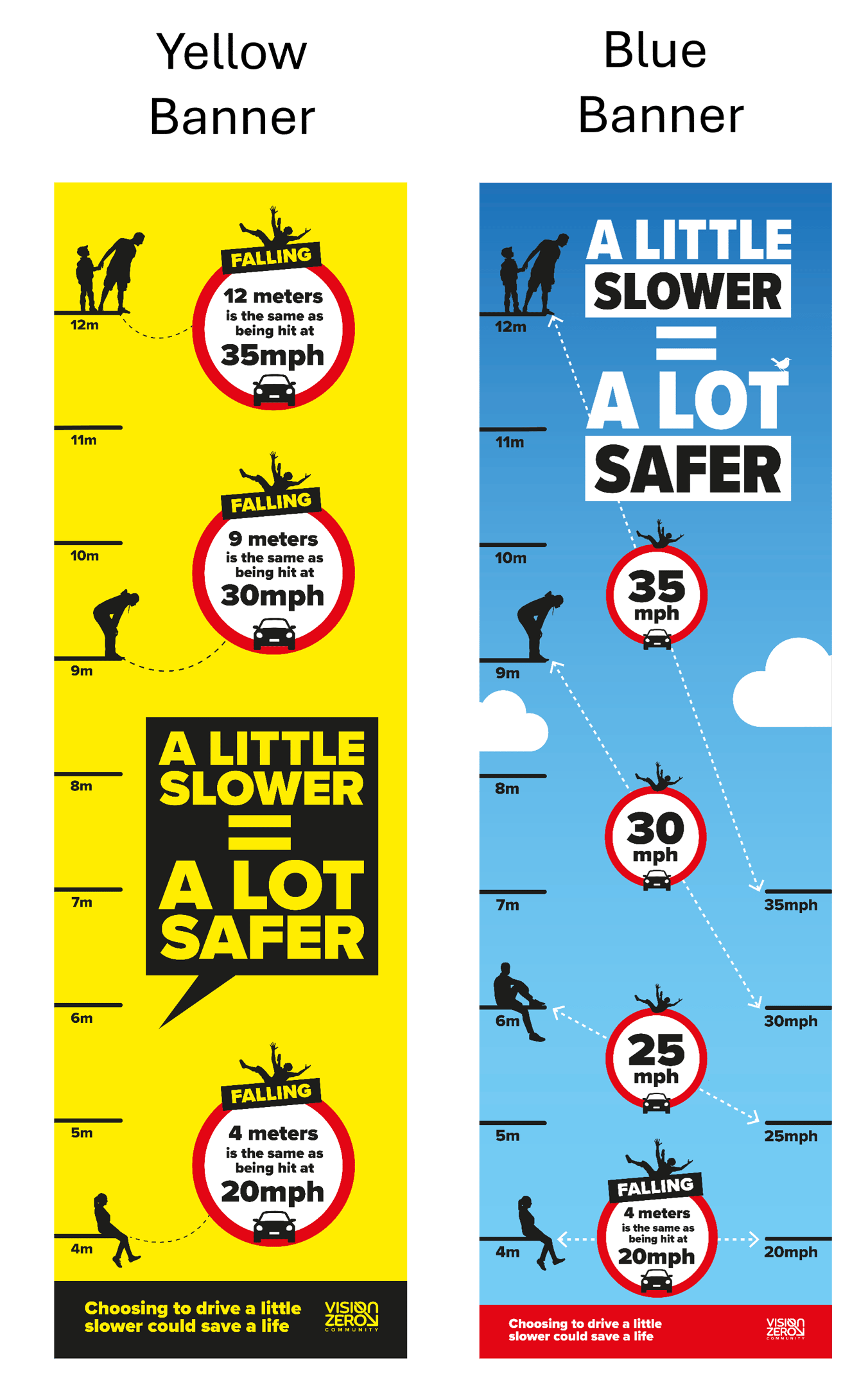

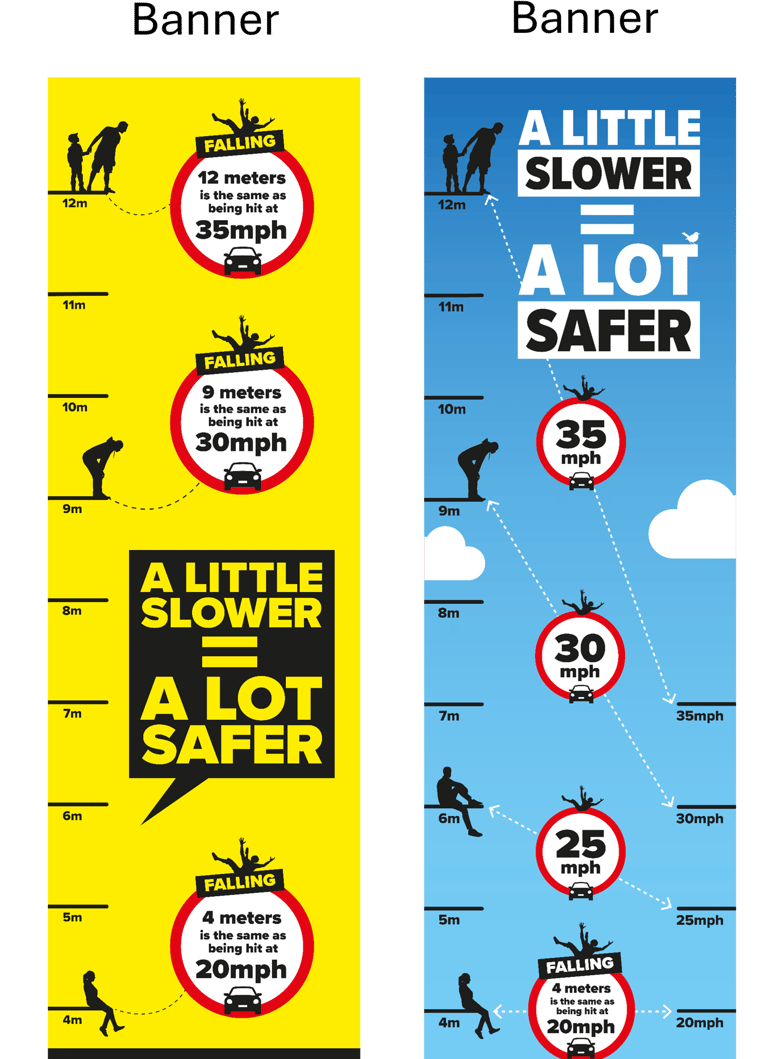

We evaluated a novel behavioural intervention designed to communicate the non-linear relationship between vehicle speed and injury severity. The campaign reframed speed in terms of an intuitive physical analogy: the equivalent height from which a pedestrian would fall. For example, being struck at 20 mph is roughly equivalent (in kinetic energy terms) to falling from around 4 metres, while 35 mph corresponds to a fall of around 12.5 metres. Because kinetic energy rises with the square of speed, a 75% increase in speed roughly triples the equivalent height, illustrating how relatively small increases in speed can lead to disproportionately severe outcomes.
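The arithmetic behind the analogy can be sketched in a few lines of Python. This is a minimal illustration that equates kinetic energy with gravitational potential energy, assuming a free fall from rest with no air resistance; the function name `equivalent_fall_height` is hypothetical.

```python
# Equivalent fall height: equate kinetic energy (1/2 m v^2) with potential
# energy (m g h), so h = v^2 / (2 g). Assumes free fall from rest, no drag.

G = 9.80665          # standard gravity, m/s^2
MPH_TO_MS = 0.44704  # 1 mph in m/s (exact)


def equivalent_fall_height(speed_mph: float) -> float:
    """Return the fall height in metres that gives the same impact speed."""
    v = speed_mph * MPH_TO_MS
    return v ** 2 / (2 * G)


if __name__ == "__main__":
    for mph in (20, 30, 35, 40):
        print(f"{mph} mph is like falling from {equivalent_fall_height(mph):.1f} m")
```

Because height scales with speed squared, the ratio between any two speeds does not depend on units: (35/20)² ≈ 3.06, which is why 35 mph corresponds to roughly three times the 20 mph height.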

To test the effectiveness of this message, we implemented a controlled experimental design comparing alternative visual banner formats against a control condition. Participants were randomly assigned to conditions and completed pre- and post-measures assessing comprehension, perceived risk, and behavioural intentions. The aim was not simply to test whether the campaign “worked”, but to quantify how much it improved understanding and whether this change was meaningful for behaviour.

Designing for Sensitivity: Simulation-Based Power Analysis

A key challenge in evaluating behavioural interventions is that effects are often small but practically important. Standard sample size rules of thumb risk producing underpowered studies that fail to detect meaningful changes.

To address this, we conducted a simulation-based power analysis tailored to the exact study design and outcome measures. Rather than relying on generic assumptions, we simulated data under plausible effect sizes (e.g., a 5–10 percentage point improvement in correct understanding) and realistic data-generating conditions. This approach allowed us to:

Estimate the probability of detecting meaningful intervention effects

Ensure the study was appropriately powered for decision-making

Avoid wasted resources on under- or over-sized samples

This level of design precision is rarely applied in practice but is critical when interventions are being evaluated to inform policy or investment decisions.
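The logic of a simulation-based power analysis can be illustrated with a deliberately simplified Python sketch. It assumes a two-group, single-measurement comparison of the proportion of correct answers, analysed with a pooled two-proportion z-test rather than a mixed-effects model; the baseline rate, effect sizes, sample sizes and the function names (`simulated_power`, `two_proportion_p_value`) are hypothetical. Power is estimated as the share of simulated studies in which the test rejects the null hypothesis at the chosen alpha.

```python
import math
import random
from statistics import NormalDist


def two_proportion_p_value(x1: int, n1: int, x2: int, n2: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    p_pool = (x1 + x2) / (n1 + n2)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n1 + 1 / n2))
    if se == 0:  # all answers identical: no evidence of a difference
        return 1.0
    z = (x2 / n2 - x1 / n1) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulated_power(n_per_group: int, p_control: float, effect: float,
                    alpha: float = 0.05, n_sims: int = 1000, seed: int = 1) -> float:
    """Estimate power as the share of simulated studies that detect the effect."""
    rng = random.Random(seed)
    p_treat = p_control + effect
    detected = 0
    for _ in range(n_sims):
        # Simulate one study: each participant is correct with their arm's probability.
        x_control = sum(rng.random() < p_control for _ in range(n_per_group))
        x_treat = sum(rng.random() < p_treat for _ in range(n_per_group))
        if two_proportion_p_value(x_control, n_per_group, x_treat, n_per_group) < alpha:
            detected += 1
    return detected / n_sims


if __name__ == "__main__":
    # Hypothetical scenario: 50% correct at baseline, +5 or +10 points with the campaign.
    for effect in (0.05, 0.10):
        for n in (100, 200, 400, 800):
            print(f"effect={effect:.2f} n/group={n}: power ~ {simulated_power(n, 0.5, effect):.2f}")
```

Repeating the call over a grid of sample sizes and effect sizes traces out a power curve; the smallest sample that reaches the target power (commonly 80% or 90%) is a candidate design. Replacing the z-test with the planned analysis model would tailor the simulation to a specific study.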

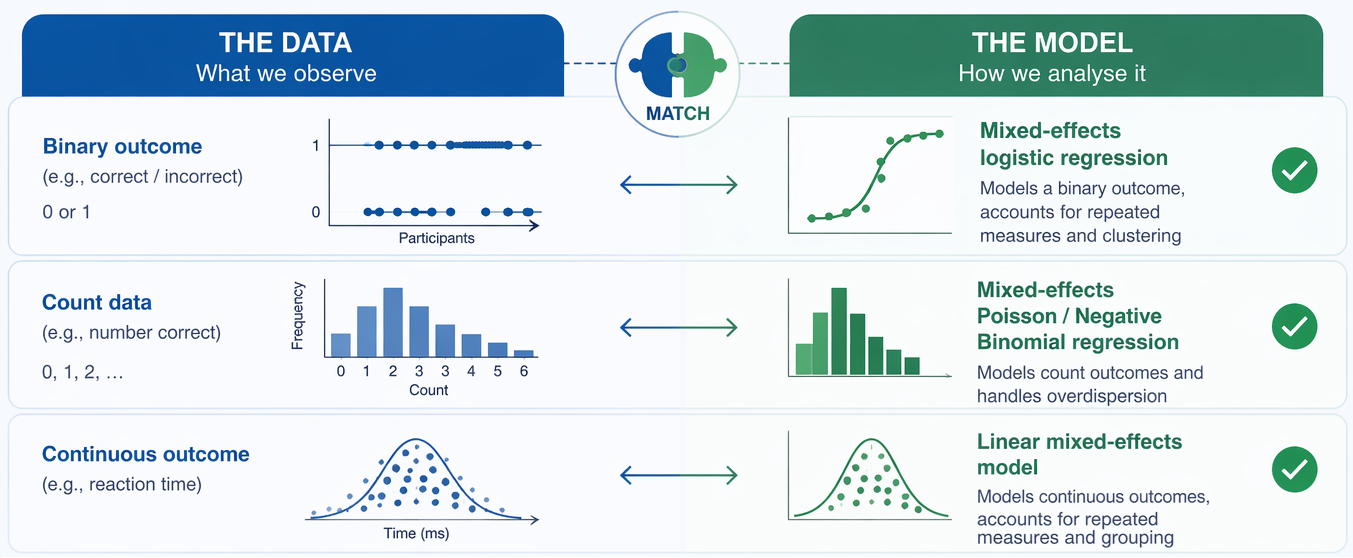

Analysing the Right Way: Models Aligned to the Data

Rather than defaulting to traditional methods such as ANOVA, which assume a continuous outcome with normally distributed errors, we selected statistical models aligned to the structure of the data.

For example, binary outcomes (e.g., correct vs incorrect understanding) were analysed using mixed-effects logistic regression, accounting for repeated measures within individuals and variation across items. This approach:

Properly reflects the measurement scale of the data

Improves precision by modelling within-person change

Allows for more realistic inference about intervention effects

By aligning the analysis to the data-generating process, the results are both more accurate and more interpretable for decision-makers.
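To make the model structure concrete, the following Python sketch simulates data from a mixed-effects logistic model of the kind described above: each participant answers the same items before and after exposure, with random intercepts for participants and items. The intervention effect is the post × condition interaction on the log-odds scale. The coefficient values, variable names and the `simulate_responses` helper are hypothetical, chosen only for illustration.

```python
import math
import random


def inv_logit(x: float) -> float:
    """Map a log-odds value to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


def simulate_responses(n_participants: int, n_items: int, beta: dict,
                       sd_person: float, sd_item: float, seed: int = 0) -> list:
    """Simulate correct (1) / incorrect (0) answers from a mixed-effects logistic model.

    logit P(correct) = b0 + b_post*post + b_treat*treat + b_int*post*treat
                       + u[participant] + w[item]
    with u ~ Normal(0, sd_person) and w ~ Normal(0, sd_item).
    """
    rng = random.Random(seed)
    item_effects = [rng.gauss(0.0, sd_item) for _ in range(n_items)]
    rows = []
    for pid in range(n_participants):
        treat = int(rng.random() < 0.5)      # random assignment to condition
        u = rng.gauss(0.0, sd_person)        # participant's baseline ability
        for post in (0, 1):                  # pre- and post-exposure measurement
            for item, w in enumerate(item_effects):
                eta = (beta["b0"] + beta["b_post"] * post + beta["b_treat"] * treat
                       + beta["b_int"] * post * treat + u + w)
                correct = int(rng.random() < inv_logit(eta))
                rows.append({"participant": pid, "item": item, "treat": treat,
                             "post": post, "correct": correct})
    return rows


if __name__ == "__main__":
    # Hypothetical coefficients on the log-odds scale.
    betas = {"b0": 0.0, "b_post": 0.1, "b_treat": 0.0, "b_int": 0.4}
    data = simulate_responses(300, 8, betas, sd_person=0.8, sd_item=0.5)
    for treat in (0, 1):
        for post in (0, 1):
            cell = [r["correct"] for r in data if r["treat"] == treat and r["post"] == post]
            print(f"treat={treat} post={post}: {sum(cell) / len(cell):.2f} correct")
```

Data of this shape could then be fitted with, for example, the `glmer` function from the R package lme4, using the formula `correct ~ post * treat + (1 | participant) + (1 | item)` with `family = binomial`. Simulating from the assumed model in this way is also the core of a simulation-based power analysis.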

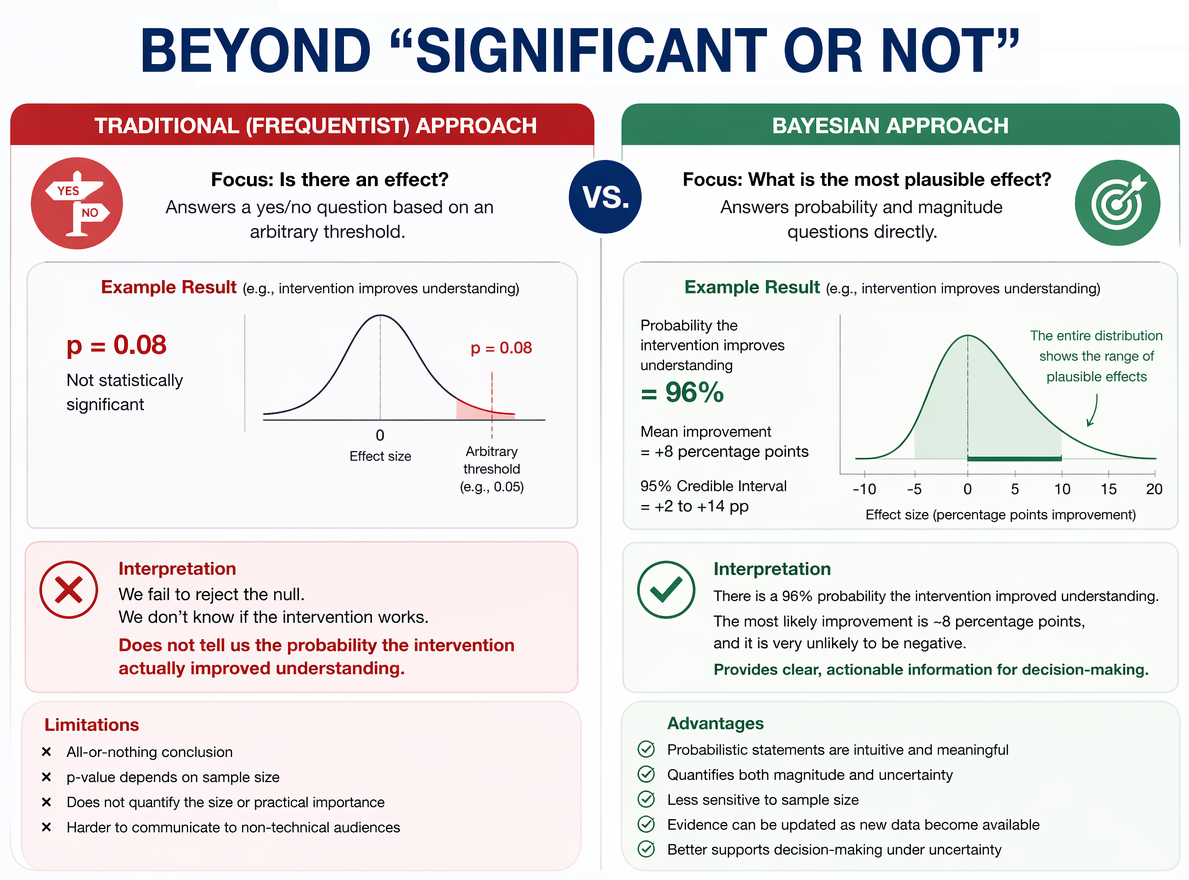

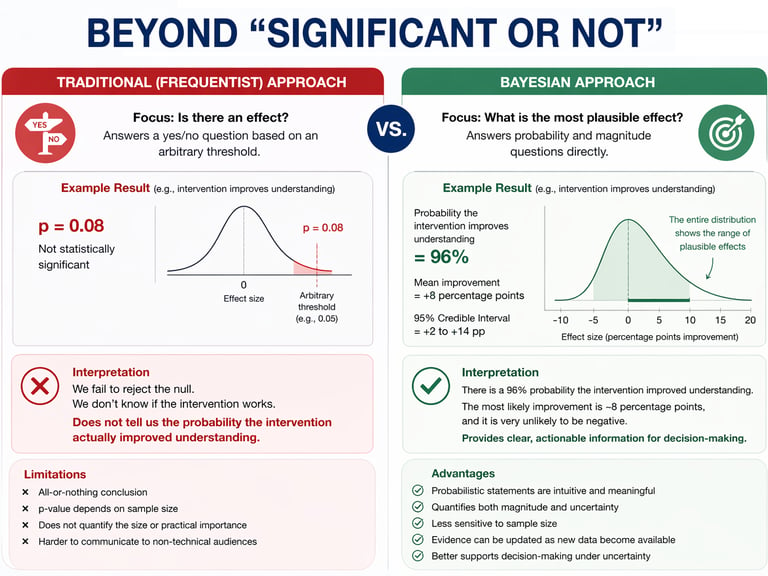

Moving Beyond “Significant or Not”: A Bayesian Approach

A central feature of our evaluation approach is the use of Bayesian statistical modelling, which provides a more informative and decision-relevant alternative to traditional frequentist methods.

Instead of asking whether an effect is “statistically significant”, the Bayesian framework answers questions such as:

What is the probability that the intervention improved understanding?

How large is the improvement likely to be?

How confident can we be that the effect is practically meaningful?

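For this evaluation, we estimated the posterior distribution of the intervention effect. This allowed us to quantify both the probability that the campaign increased correct responses and the probability that the increase exceeded a practically meaningful threshold, and to apply an explicit decision rule (e.g., requiring ≥95% posterior probability of a positive effect). This provides a far clearer basis for decision-making than a binary significant/non-significant verdict based on a p-value.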
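A minimal Bayesian sketch in Python shows how posterior probabilities of this kind can be computed. For simplicity it swaps in a conjugate Beta-Binomial model for two independent groups with flat Beta(1, 1) priors, instead of a full Bayesian mixed-effects model; the 5 percentage point threshold, the counts in the example and the function name `posterior_effect_summary` are hypothetical. Posterior draws of each group's proportion correct are differenced to give draws of the intervention effect.

```python
import random


def posterior_effect_summary(x_control: int, n_control: int, x_treat: int, n_treat: int,
                             threshold: float = 0.05, draws: int = 20000, seed: int = 0) -> dict:
    """Monte Carlo summary of the posterior difference in proportion correct.

    With a flat Beta(1, 1) prior, each group's posterior is
    Beta(1 + correct, 1 + incorrect); differencing paired draws gives
    draws from the posterior of the intervention effect.
    """
    rng = random.Random(seed)
    diffs = sorted(
        rng.betavariate(1 + x_treat, 1 + n_treat - x_treat)
        - rng.betavariate(1 + x_control, 1 + n_control - x_control)
        for _ in range(draws)
    )
    return {
        "mean_effect": sum(diffs) / draws,
        "p_positive": sum(d > 0 for d in diffs) / draws,            # P(effect > 0)
        "p_meaningful": sum(d > threshold for d in diffs) / draws,  # P(effect > threshold)
        "ci_95": (diffs[int(0.025 * draws)], diffs[int(0.975 * draws) - 1]),  # equal-tailed
    }


if __name__ == "__main__":
    # Illustrative counts only: 210/400 correct in control, 250/400 after the campaign.
    summary = posterior_effect_summary(210, 400, 250, 400)
    for key, value in summary.items():
        print(key, value)
```

Under a decision rule like the one above, the intervention would be judged effective if `p_positive` is at least 0.95, while `p_meaningful` and the 95% credible interval indicate whether the improvement is also large enough to matter. The counts are placeholders, not results from this evaluation.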

Bayesian methods are particularly valuable in behavioural interventions where:

Effects are modest but cumulative

Decisions must be made under uncertainty

Evidence needs to be communicated clearly to non-technical stakeholders

Insight and Impact

Transforming research into actionable solutions.

Email: jonathan.rolison@outlook.com

© 2025. All rights reserved.
